Microsoft Research held its first PhD Workshop, which focused on new technologies for the cloud. The main themes of the workshop were optics and custom hardware. The workshop was spread over two days and included a keynote by Simon Peyton Jones on how to give a research talk, talks about three projects that are investigating various optical solutions (Silica, Sirius and Iris), and finally a talk by Shane Fleming on Honeycomb, which replaces CPUs in database storage with custom hardware built on FPGAs.

The workshop also included two poster sessions, in which all the attendees presented the projects they were currently working on. The posters were quite varied: they came from various universities and covered topics from optical circuits and storage to custom hardware and FPGAs. I presented our work on our Verilog synthesis tool fuzzer.

I would like to thank Shane Fleming for inviting us to the workshop, and also thank all the organisers of the workshop.

* Microsoft projects

Four Microsoft projects were presented, with the main themes being custom hardware and optical electronics.

** Honeycomb: Hardware Offloads for Distributed Systems

A big problem that large cloud infrastructures like Azure suffer from is the time it takes to retrieve data from databases. At the moment, storage is set up as many nodes, each with a CPU that walks a data structure to find the location of the requested data. The CPU then accesses that location on the hard drives, retrieves the data, and finally sends it to the correct destination.

General-purpose CPUs incur a Turing Tax: because they are so general, they are more expensive and less efficient than specialised hardware. It would therefore be much more efficient to have custom hardware that walks the tree structure to find the location of the data and then accesses it directly.

The idea is to use FPGAs to catch and process the network packet requesting data, fetch the data from the correct location and then return it through the network. Using an FPGA means that each network layer can be customised and reworked for that specific use case. Even the data structure can be optimised for the FPGA so that it becomes extremely fast to traverse it and find the data's location.
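To make the tree walk concrete, here is a minimal software sketch of an index lookup that maps a key to a storage location. The `Node` layout, the `lookup` function and the `(disk, offset)` location format are assumptions made for illustration and are not taken from Honeycomb. In the design described above, this traversal would be carried out in FPGA logic rather than on a CPU.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Hypothetical location format: (disk id, byte offset).
Location = Tuple[int, int]


@dataclass
class Node:
    """A node of a simple B+-tree-like index (illustrative layout only)."""
    keys: List[int]
    children: List["Node"] = field(default_factory=list)     # empty for leaves
    locations: List[Location] = field(default_factory=list)  # used by leaves only


def lookup(root: Node, key: int) -> Optional[Location]:
    """Walk from the root to a leaf and return the location stored for `key`."""
    node = root
    # Inner nodes: keys act as separators, child i + 1 holds keys >= keys[i].
    while node.children:
        node = node.children[bisect_right(node.keys, key)]
    # Leaf: binary search for an exact match.
    i = bisect_left(node.keys, key)
    if i < len(node.keys) and node.keys[i] == key:
        return node.locations[i]
    return None


# Usage: a two-level index over four keys.
leaf_a = Node(keys=[10, 20], locations=[(0, 0), (0, 4096)])
leaf_b = Node(keys=[30, 40], locations=[(1, 0), (1, 4096)])
root = Node(keys=[30], children=[leaf_a, leaf_b])

print(lookup(root, 20))  # (0, 4096)
print(lookup(root, 25))  # None
```

Every step of the loop is a small, fixed comparison followed by a pointer chase, which is the kind of work that a general-purpose CPU is over-provisioned for.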

** Silica: Optical Storage

The second talk was on optical storage, which is a possible solution for archiving data. Today, all storage is done using either magnetic storage (tapes and hard drives), discs or flash storage (SSDs).

Flash storage is extremely expensive and is therefore not ideal for archiving. Instead, because it is fast, it is often used as the main storage.

Discs can store perhaps only 300GB, and there are physical limitations which stop the capacity from growing. The data is stored by engraving the disc, but even there the lifetime of the disc is not permanent, as the film on the disc becomes bluish over time.

To archive a lot of data for a long time, the current solution is to use magnetic storage, as it is very cheap. However, magnetic storage inherently degrades with time, so extra costs are incurred every few years when the data has to be migrated to new media.

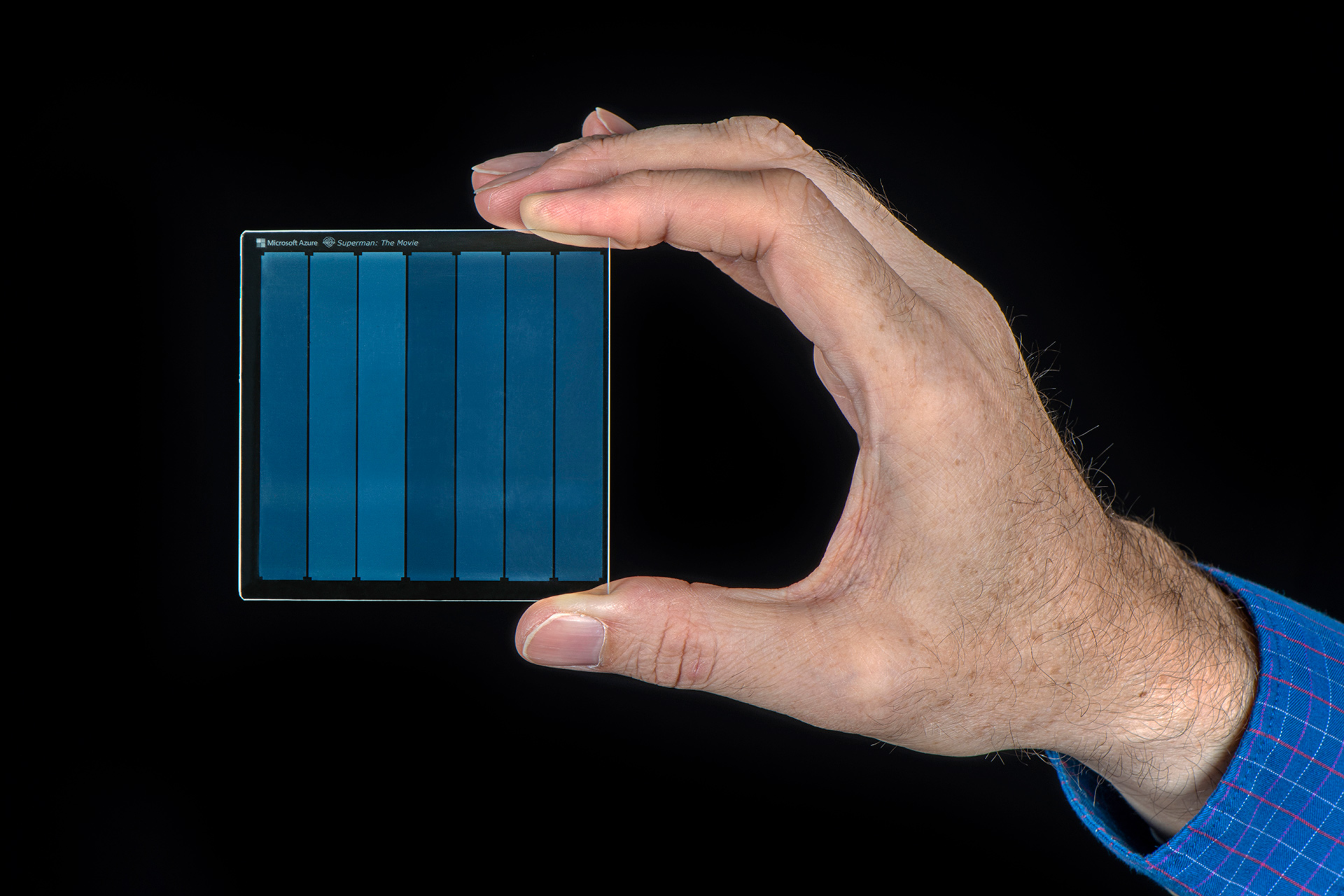

We therefore need a storage solution that will not degrade with time and is compact enough to store a lot of data. The answer to this is optical storage. Data is stored by creating tiny deformities in the glass using a femtosecond laser, which can then be read back using LEDs. The deformities have different properties such as angle and phase which dictate the current value at that location.

Figure 1: Project silica: image of 1978 “Superman” movie encoded on silica glass. Photo by Jonathan Banks for Microsoft.
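As a rough illustration of how two physical properties of a deformity, such as angle and phase, can jointly hold a value, the sketch below packs bytes into "voxels", each described by an angle level and a phase level. The number of levels (four of each, giving 4 bits per voxel) and the names `encode` and `decode` are assumptions for illustration only, not the actual encoding used by Silica.

```python
from typing import List, Tuple

# Assumed level counts, chosen so that one voxel holds exactly 4 bits.
ANGLE_LEVELS = 4
PHASE_LEVELS = 4
assert ANGLE_LEVELS * PHASE_LEVELS == 16

# A voxel is modelled as (angle level, phase level).
Voxel = Tuple[int, int]


def encode(data: bytes) -> List[Voxel]:
    """Split each byte into two 4-bit values and map each to one voxel."""
    voxels: List[Voxel] = []
    for byte in data:
        for nibble in (byte >> 4, byte & 0x0F):
            # divmod yields (angle level, phase level) for this 4-bit value.
            voxels.append(divmod(nibble, PHASE_LEVELS))
    return voxels


def decode(voxels: List[Voxel]) -> bytes:
    """Recover the original bytes from a sequence of voxels."""
    nibbles = [angle * PHASE_LEVELS + phase for angle, phase in voxels]
    return bytes((hi << 4) | lo for hi, lo in zip(nibbles[0::2], nibbles[1::2]))


# Usage: the byte 0x41 ("A") becomes two voxels and decodes back unchanged.
print(encode(b"A"))          # [(1, 0), (0, 1)]
print(decode(encode(b"A")))  # b'A'
```

With more distinguishable levels per property, each voxel would carry more bits, which is one way the density of such a medium could scale.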

** Sirius: Optical Data Center Networks

With the increased use of fiber in data centers, the cost of switching using electronic switches increases dramatically, because the light needs to be converted to electricity before the switch and then converted back to light after it. This conversion is slow and adds a large latency to the network.

Instead, one can switch the incoming light directly using optical switches, which reduces the switching latency to a few nanoseconds. This makes it possible to have fully optical data centers.

** Iris: Optical Regional Networks

Finally, Project Iris explores how regional and wide-area cloud networks could be designed to cope better with growing network traffic.

* How to Give a Research Talk

Simon Peyton Jones gave the first talk, which was extremely entertaining and insightful. He demonstrated how to give a good talk while at the same time giving great advice on how to do so, with tips that applied to conference talks as well as broader talks. The slides used Comic Sans, but that only showed how good a talk can be if one doesn’t mind that.

The main things that should be included in a talk are:

- Motivation of the work (20%).

- Key idea (80%).

- There is no 3.

The purpose of the motivation is to wake people up so that they can decide if they would be interested in the talk. Most people will only give you two minutes before they open their phone and look at emails, so it is your job as the speaker to captivate them before they do that. Following this main structure, his advice is that introductions and acknowledgements should come at the end of the talk, not at the start. If the introductions are important, they could also come right after the main motivation.

The rest of the talk should be on the key idea of the project, and it should focus on explaining the idea deeply enough to really satisfy the audience. Talks that only briefly touch on many areas often leave the audience wanting more. However, this does not mean that the talk should be full of technical detail and slides containing complex and abstract formulas. Instead, examples should be given wherever possible, as they make it much easier to convey the intuition behind the more general formulas. Examples also allow you to show edge cases, which may show the audience why the general case is not as straightforward as they might think.